> Optical Flow is not Ray Tracing. That's all people need to know to pick the claims apart.

Not Ray Tracing at all; it is AI-assisted image generation and interpolation.

NVIDIA CEO Jensen Huang hints at ‘exciting’ next-generation GPU update on September 20th Tuesday

- Thread starter Comixbooks

> It does not advance Ray Tracing in any way; rather, it detracts quite destructively from it.

This is stated as being trivial, and maybe I am a bit slow for not understanding, but how so?

> Not Ray Tracing at all; it is AI-assisted image generation and interpolation.

Also, it has not yet been reviewed and dissected for quality. So is Nvidia at a stage where a frame created using AI replaces a whole rendered RT frame where each pixel had computation from shaders and RT cores? A whole new way of making frames just as good as a rendered frame? If not, will we (or I) be able to tell the difference and not get a migraine, etc.?

The simulation and RT aspect for future games is intriguing, but unless Nvidia dishes out the content, the fact that most AAA titles are designed around consoles makes this capability mostly unusable except for some developers.

One metaphor I got from the Nvidia presentation is entanglement: Nvidia SDKs, tools, proprietary tech, hardware and so on. Do you, or your company, really want to be partnered with or dictated to by Nvidia? Reliant on Nvidia to fix their SDKs, progress their tools, expand coverage and keep up to date with progress made in medical and scientific endeavors, weather forecasting, drug R&D and so on? Restricted to Nvidia hardware, with possible bans and lack of support if the company has severe issues, wants to go beyond Nvidia, or needs source code to fully customize and use other solutions?

Waiting on real quality reviews, user feedback and the AMD launch. I'm just more interested in a quality monitor. I usually get at least one GPU per generation, normally 2 or more. I have not skipped a generation in over 2 decades, and so far there is nothing here from Nvidia I need.

> This is stated as being trivial, and maybe I am a bit slow for not understanding, but how so?

When you quote me like this, it would help to replace the demonstrative pronouns with what they're referring to. The frame generation in DLSS 3.0 is an interpolation (blend) of two 2D images using direction information for each pixel. This is all done outside of the game engine. True ray tracing happens inside the game engine, because it needs access to all of the game's scene assets (lights, geometries, materials, etc.).

> The frame generation in DLSS 3.0 is an interpolation (blend) of two 2D images using direction information for each pixel.

Not from my understanding; it is an extrapolation from the last couple of images using motion vectors (of things including light).
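The interpolation-vs-extrapolation disagreement comes down to which real frames a generated frame is built from. Below is a minimal toy sketch, assuming a 1D row of pixels and a single global motion vector; real frame generation uses per-pixel motion vectors, optical flow and a neural network, none of which is modelled, and all function names are hypothetical. Interpolation needs both the previous and the next rendered frame, while extrapolation only needs the latest one. Either way it is a 2D warp of finished images; no rays are traced.

```python
# Toy model: a 1D row of pixels with one lit pixel moving right.
# Hypothetical helpers for illustration only; not DLSS 3's actual algorithm.

def render(width: int, pos: int) -> list[float]:
    """A 'real' rendered frame: one lit pixel at index pos."""
    frame = [0.0] * width
    frame[pos] = 1.0
    return frame


def warp(frame: list[float], shift: int) -> list[float]:
    """Move every pixel by one global motion vector; pixels pushed off-screen are dropped."""
    out = [0.0] * len(frame)
    for x, value in enumerate(frame):
        if 0 <= x + shift < len(frame):
            out[x + shift] = value
    return out


def interpolate(prev: list[float], nxt: list[float], motion: int) -> list[float]:
    """In-between frame: requires BOTH the previous and the next real frame.
    Warp prev forward and nxt backward by half the motion, then blend (average)."""
    half = motion // 2  # toy assumption: motion is an even number of pixels
    forward = warp(prev, half)
    backward = warp(nxt, -half)
    return [(a + b) / 2 for a, b in zip(forward, backward)]


def extrapolate(latest: list[float], motion: int) -> list[float]:
    """Predicted frame: uses only the latest real frame and its last motion,
    so it can be shown before the next real frame has been rendered."""
    return warp(latest, motion // 2)


f0 = render(10, 2)
f1 = render(10, 6)
motion = 4  # pixels the object moved between f0 and f1

mid = interpolate(f0, f1, motion)
ahead = extrapolate(f1, motion)
print(mid.index(1.0), ahead.index(1.0))  # 4 8
```

The trade-off shows up when motion changes: interpolation sees where the object actually ended up, while extrapolation keeps moving it along the old path.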

> True ray tracing happens inside the game engine, because it needs access to all of the game's scene assets

Yes, I think I get what you mean, but nothing ray-tracing-wise on the actual regular frame was hurt by this, no? How is DLSS 3.0 degrading RTX?

> Not from my understanding; it is an extrapolation from the last couple of images using motion vectors (of things including light).

Nvidia's own words were "intermediate frame", meaning interpolation, even though their slides suggest extrapolation. However, the input lag has to be negated with Reflex, so it must be interpolation. The vagueness cannot be a coincidence.

> Yes, I think I get what you mean, but nothing ray-tracing-wise on the actual regular frame was hurt by this, no? How is DLSS 3.0 degrading RTX?

The implication that DLSS 3.0 increases RT performance is absurd. Plus, you're paying for something that doesn't advance ray tracing, that should have gone into real RT hardware.

> Also, it has not yet been reviewed and dissected for quality. So is Nvidia at a stage where a frame created using AI replaces a whole rendered RT frame where each pixel had computation from shaders and RT cores? A whole new way of making frames just as good as a rendered frame? If not, will we (or I) be able to tell the difference and not get a migraine, etc.?
>
> The simulation and RT aspect for future games is intriguing, but unless Nvidia dishes out the content, the fact that most AAA titles are designed around consoles makes this capability mostly unusable except for some developers.
>
> One metaphor I got from the Nvidia presentation is entanglement: Nvidia SDKs, tools, proprietary tech, hardware and so on. Do you, or your company, really want to be partnered with or dictated to by Nvidia? Reliant on Nvidia to fix their SDKs, progress their tools, expand coverage and keep up to date with progress made in medical and scientific endeavors, weather forecasting, drug R&D and so on? Restricted to Nvidia hardware, with possible bans and lack of support if the company has severe issues, wants to go beyond Nvidia, or needs source code to fully customize and use other solutions?
>
> Waiting on real quality reviews, user feedback and the AMD launch. I'm just more interested in a quality monitor. I usually get at least one GPU per generation, normally 2 or more. I have not skipped a generation in over 2 decades, and so far there is nothing here from Nvidia I need.

You know that this technology has been around and available since 2018? It was part of the original DLSS, was improved in DLSS 2.0, and improved further in DLSS 3.0, which now uses specific hardware for it. It's not new nor unproven; what it is now is far easier to actually implement from a development perspective, and as such it is getting rolled into Unreal 4, 5, and Unity.

> Nvidia's own words were "intermediate frame", meaning interpolation, even though their slides suggest extrapolation. However, the input lag has to be negated with Reflex, so it must be interpolation. The vagueness cannot be a coincidence.

The fact that there will be no added input lag must mean extrapolation by definition, and their slides seem somewhat clear, like you said. The generated frame will be between 2 frames either way and would be an intermediate frame either way. Not adding input lag requires intelligence (there would be a risk of a real frame being slowed down by a made-up frame), which I imagine Reflex helps to handle.
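The input-lag argument in this exchange can be made concrete with a toy timing model. This is a minimal sketch, assuming perfectly even frame pacing and ignoring render queues, Reflex and display scan-out; `real_frame_delay_ms` is a hypothetical name. With interpolation, a newly rendered real frame waits while the in-between frame is shown first, so it reaches the screen roughly half a rendered-frame interval later; with extrapolation the real frame is not held back.

```python
def real_frame_delay_ms(render_fps: float, mode: str, gen_cost_ms: float = 0.0) -> float:
    """Extra time a freshly rendered real frame waits before being displayed.

    Toy model only: even frame pacing, no render queue, no Reflex, no scan-out.
    """
    frame_time_ms = 1000.0 / render_fps
    if mode == "interpolate":
        # The in-between frame needs real frame N+1 to exist, and it is shown
        # before N+1, so N+1 is held back by half an interval (plus generation time).
        return gen_cost_ms + frame_time_ms / 2
    if mode == "extrapolate":
        # The predicted frame is built from past frames only; N+1 is shown when ready.
        return 0.0
    raise ValueError(f"unknown mode: {mode!r}")


for fps in (30, 60, 120):
    print(fps, round(real_frame_delay_ms(fps, "interpolate"), 1), real_frame_delay_ms(fps, "extrapolate"))
# 30 16.7 0.0
# 60 8.3 0.0
# 120 4.2 0.0
```

In this model interpolation always costs some latency at a given render rate, so something like Reflex would have to recover it elsewhere in the pipeline; the model says nothing about how much Reflex actually recovers.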

> The implication that DLSS 3.0 increases RT performance is absurd. Plus, you're paying for something that doesn't advance ray tracing, that should have gone into real RT hardware.

Like DLSS 2.0, it is not that it increases RT performance in particular; it is that the ability to get good 4K quality from an actual lower resolution makes playing with RT possible on a 4K screen. The same applies here: because RT is really heavy, especially with the path tracing they have started to support, it could make you drop below your monitor's minimum VRR frame rate from time to time, and DLSS 3.0 will avoid that, making it possible to use RT comfortably. At least, that's my understanding.
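The upscaling point (render below 4K, output at 4K) is mostly pixel arithmetic. A rough sketch, assuming shading and ray-tracing cost scale linearly with the number of pixels rendered, which real games only approximate; the internal resolutions are just examples, not DLSS's exact presets:

```python
def pixel_ratio(output: tuple[int, int], internal: tuple[int, int]) -> float:
    """How many times more pixels the output has than the internally rendered image."""
    out_w, out_h = output
    in_w, in_h = internal
    return (out_w * out_h) / (in_w * in_h)


UHD_4K = (3840, 2160)  # 8,294,400 pixels

for internal in [(2560, 1440), (1920, 1080)]:
    print(internal, pixel_ratio(UHD_4K, internal))
# (2560, 1440) 2.25
# (1920, 1080) 4.0
```

Under that linear assumption, rendering at 1440p and upscaling frees roughly 2.25x the per-frame budget for RT, which is the sense in which upscaling makes RT playable rather than making the RT itself faster.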

Blade-Runner

Supreme [H]ardness

- Joined

- Feb 25, 2013

- Messages

- 4,439

> LOL, I am not indignant at all; you should be talking to the people with all the outrage.

Yet here you are posting lengthy ignorant diatribes which appear to be primarily based on your personal views of consumer responsibility.

> And I would love for you to quote the regulatory and legal systems that Nvidia are breaking.

Somewhat ironic that you claim product information is so easily available to consumers, yet you are incapable of doing 2 minutes' worth of googling yourself.

https://www.legislation.gov.uk/uksi/2008/1277/contents/made

https://www.legislation.gov.au/Details/C2022C00212

https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=celex:32006L0114

https://www.competitionbureau.gc.ca/eic/site/cb-bc.nsf/eng/03133.html

https://sso.agc.gov.sg/Act/CPFTA2003#Sc2-

> How come they weren't fined or whatever for the 3080 12GB and the 3080 10GB, or, going back further, the 1060 6GB and 1060 3GB? The difference between those cards wasn't just VRAM: different number of CUDA cores and memory bandwidth.

How were they marketed? Were all the specs prominently displayed on the boxes? What other representations were included in the marketing material that might have made it possible to distinguish the products?

It is a question of facts and circumstances, and just because regulators haven't taken action in the past doesn't mean they won't in the future. All it takes is enough consumer complaints for them to take an interest. Case in point: Valve got away with misrepresenting for over a decade that Australian consumers were not entitled to refunds for broken software, until of course the ACCC successfully sued them.

https://www.accc.gov.au/media-relea...representing-gamers-consumer-guarantee-rights

Same with geoblocking in the EU.

https://www.ign.com/articles/valve-capcom-bethesda-fined-94-million-by-eu-for-geo-blocking

> Or how come neither AMD nor Nvidia has ever been fined for rebranding old cards and releasing them as new cards?

And? What has this whataboutism got to do with the issue at hand? What representations or statements were made in relation to those cards which were going to confuse consumers? These overly simplistic takes don't actually assist your position.

> The specs of both GPUs are on the site. You don't have to leave the shop or anything these days, just check on your phone.

Categorically irrelevant: this is not an emotive statement based on personal views; there is plenty of case law where this exact point has been decided against marketers/sellers.

Of course the additional irony is that you are implicitly conceding that the naming scheme is misleading, because if lazy retarded consumers didn't want to be misled by the name of the product they should have done some research beforehand.

> And it's not about being snooty, as you call it. If you don't have a clue about what you are buying, you should ask for advice or do some research. If someone asked you about a new card coming out, would you tell them to buy it based on the marketing blurb from the manufacturer, or would you tell them to wait for reviews?

See above. It is quite apparent that this is based on your personal views; in the real world, consumers are entitled to assume truth in advertising. They are not obliged to do any research if they choose not to.

> The naming scheme isn't the problem, the price is. I bet nobody would give a damn about naming schemes if the 4080 12GB was $399 and the 16GB was $499.

No one might give a damn if the price is right, but the naming scheme would still technically be a problem. The main difference is that fewer people would be inclined to lodge complaints if they feel that, notwithstanding that they thought they were getting a 4080 16GB with less memory, they can live with that because it was so much cheaper.

> Serious question, do you think there would have been more or less outrage if they called the card the 4070? Almost a doubling of price from the 3070. The reaction would probably have been worse.

That's the entire crux of the issue: the fact that they have changed the name to create the impression that the price hike is justified. The price they charge is a matter for them, but using marketing and naming to manipulate consumer sentiment and understanding of the product exposes them to legal issues.

> The naming scheme isn't the problem, the price is. I bet nobody would give a damn about naming schemes if the 4080 12GB was $399 and the 16GB was $499.
>
> Serious question, do you think there would have been more or less outrage if they called the card the 4070? Almost a doubling of price from the 3070. The reaction would probably have been worse.

You're right, but therein lies the issue... at $399 there wouldn't have been any outrage, because they would be placing it in the appropriate tier... the 4080 12GB is not a true 4080... so lowering the price would have solved that issue... but they are doubling down on the problem by calling it a 4080 and selling it at previous-gen 80-series card prices.
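The "almost a doubling of price from the 3070" figure quoted above can be checked against launch pricing, taken here as the US MSRPs of $499 for the RTX 3070 and $899 as announced for the RTX 4080 12GB (street prices differ). A minimal sketch:

```python
def price_change(old_usd: float, new_usd: float) -> tuple[float, float]:
    """Return (ratio, percent increase) between two prices."""
    ratio = new_usd / old_usd
    return ratio, (ratio - 1) * 100


RTX_3070_MSRP = 499       # USD, launch MSRP
RTX_4080_12GB_MSRP = 899  # USD, announced MSRP

ratio, pct = price_change(RTX_3070_MSRP, RTX_4080_12GB_MSRP)
print(f"{ratio:.2f}x (+{pct:.0f}%)")  # 1.80x (+80%)
```

So at MSRP "almost a doubling" works out to about +80%.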

Tamlin_WSGF

2[H]4U

- Joined

- Aug 1, 2006

- Messages

- 3,123

> You know that this technology has been around and available since 2018? It was part of the original DLSS, was improved in DLSS 2.0, and improved further in DLSS 3.0, which now uses specific hardware for it. It's not new nor unproven; what it is now is far easier to actually implement from a development perspective, and as such it is getting rolled into Unreal 4, 5, and Unity.

I think one of us, and for all I know perhaps me, is not getting noko's point here. DLSS and other upscaling methods have never come without drawbacks. That's the only proven thing. Otherwise, it has been more about "good enough to use". For this, it's purely subjective, and for many Nvidia and AMD users FSR is good enough; in cases where there is no upscaling support, FSR can be enabled and give good enough results (like on the Steam Deck, where you can have global FSR). DLSS 3 is for the moment unproven and untested in the wild. It might be great, giving more FPS than previous DLSS while still keeping it "good enough". Remains to be seen.

Consoles are the lowest common denominator, and games are often developed with what's "good enough" on consoles in mind. Sites like Hardware Unboxed and Digital Foundry have their videos on what's "good enough", "do you need Ultra", etc.

Ray tracing is a great thing for realism, but IMO it hasn't been used in a meaningful way in games to date. This might change in the future, but for now I personally consider it a tacked-on feature and a checkbox.

DLSS is a great thing. It gives higher framerates for those who find it "good enough". But it's Nvidia-only, and though Nvidia has a huge market share and can get developers to implement it in their games, they are up against both Intel and AMD this time around. I mean, DLSS 3 is only supported by Nvidia's 4XXX series, while Intel XeSS is supported even by Nvidia's Pascal/1XXX series (the same goes for FSR). FSR can even be used in games that have no in-game support. For all we know, DLSS is dead in a few years, sharing the grave of Nvidia's GPU-accelerated PhysX with the headstone "didn't play nice with others, died alone".

I am more curious about the rasterization performance and noise levels of the RTX 4XXX series. For DLSS and ray tracing, it's more about how well they work in VR, and Nvidia has a good track record supporting VR, but that would only be the odd game now and then.

I am also waiting for reviews and AMD's offering.

> I think one of us, and for all I know perhaps me, is not getting noko's point here. DLSS and other upscaling methods have never come without drawbacks. That's the only proven thing. Otherwise, it has been more about "good enough to use". For this, it's purely subjective, and for many Nvidia and AMD users FSR is good enough; in cases where there is no upscaling support, FSR can be enabled and give good enough results (like on the Steam Deck, where you can have global FSR). DLSS 3 is for the moment unproven and untested in the wild. It might be great, giving more FPS than previous DLSS while still keeping it "good enough". Remains to be seen.
>
> Consoles are the lowest common denominator, and games are often developed with what's "good enough" on consoles in mind. Sites like Hardware Unboxed and Digital Foundry have their videos on what's "good enough", "do you need Ultra", etc.
>
> Ray tracing is a great thing for realism, but IMO it hasn't been used in a meaningful way in games to date. This might change in the future, but for now I personally consider it a tacked-on feature and a checkbox.
>
> DLSS is a great thing. It gives higher framerates for those who find it "good enough". But it's Nvidia-only, and though Nvidia has a huge market share and can get developers to implement it in their games, they are up against both Intel and AMD this time around. I mean, DLSS 3 is only supported by Nvidia's 4XXX series, while Intel XeSS is supported even by Nvidia's Pascal/1XXX series (the same goes for FSR). FSR can even be used in games that have no in-game support. For all we know, DLSS is dead in a few years, sharing the grave of Nvidia's GPU-accelerated PhysX with the headstone "didn't play nice with others, died alone".
>
> I am more curious about the rasterization performance and noise levels of the RTX 4XXX series. For DLSS and ray tracing, it's more about how well they work in VR, and Nvidia has a good track record supporting VR, but that would only be the odd game now and then.
>
> I am also waiting for reviews and AMD's offering.

I want AMD to come out strong, because this is bullshit; the pricing and naming need something to stand up against them, and it's certainly not Intel this time around. But I fear AMD is just gonna ride the gravy train to the bank by being just as expensive.

NightReaver

2[H]4U

- Joined

- Apr 20, 2017

- Messages

- 3,869

> I want AMD to come out strong, because this is bullshit; the pricing and naming need something to stand up against them, and it's certainly not Intel this time around. But I fear AMD is just gonna ride the gravy train to the bank by being just as expensive.

Let's be honest. Even if they do, so many people are quick to sling out every Nvidia marketing line possible as to why they can't even consider an AMD card.

You'd think going based off online comment sections and forums that almost every home PC builder is a big time streamer who also wants to do some machine learning on the side. Also they only play DLSS/raytraced games.

Oh, and AMD drivers personally kicked their dog sometime in the past 10 years. Unforgivable.

Tamlin_WSGF

2[H]4U

- Joined

- Aug 1, 2006

- Messages

- 3,123

> I want AMD to come out strong, because this is bullshit; the pricing and naming need something to stand up against them, and it's certainly not Intel this time around. But I fear AMD is just gonna ride the gravy train to the bank by being just as expensive.

Let's cross our fingers and hope AMD doesn't get too greedy this time! With their chiplet design, they might be able to undercut Nvidia on price and still make a decent buck instead of pocketing more.

> Let's be honest. Even if they do, so many people are quick to sling out every Nvidia marketing line possible as to why they can't even consider an AMD card.
>
> You'd think going based off online comment sections and forums that almost every home PC builder is a big time streamer who also wants to do some machine learning on the side. Also they only play DLSS/raytraced games.
>
> Oh, and AMD drivers personally kicked their dog sometime in the past 10 years. Unforgivable.

They are still kicking my dog on a number of my older drafting workstations, but yeah.

> Too many hopes and dreams. Lisa Su is cut from the same cloth as Jensen Huang.

Leather pant suits?

imsirovic5

Limp Gawd

- Joined

- Jun 21, 2011

- Messages

- 346

> Too many hopes and dreams. Lisa Su is cut from the same cloth as Jensen Huang.

Every corporation operates under the same principles: maximize growth and profit for shareholders so you can attract more capital and grow. Those who do not deliver profits will not attract capital. There is no good or bad; these are the realities of the capitalist system.

And for those who think this is evil, in a speech on Nov. 11, 1947, Sir Winston Churchill reminded the UK’s House of Commons that “democracy is the worst form of government, except for all those others that have been tried.” In a similar fashion, capitalism is the worst economic system, except for all the others.

Blade-Runner

Supreme [H]ardness

- Joined

- Feb 25, 2013

- Messages

- 4,439

> Every corporation operates under the same principles: maximize growth and profit for shareholders so you can attract more capital and grow. Those who do not deliver profits will not attract capital. There is no good or bad; these are the realities of the capitalist system.
>
> And for those who think this is evil, in a speech on Nov. 11, 1947, Sir Winston Churchill reminded the UK’s House of Commons that “democracy is the worst form of government, except for all those others that have been tried.” In a similar fashion, capitalism is the worst economic system, except for all the others.

Unfortunately what we have in most Western societies is more akin to crony capitalism operating within corporate plutocracies. A discussion probably better suited for a different sub-forum.

Blade-Runner

Supreme [H]ardness

- Joined

- Feb 25, 2013

- Messages

- 4,439

Deleted member 162929

Guest

Flogger23m

[H]F Junkie

- Joined

- Jun 19, 2009

- Messages

- 14,504

I want a good cooler, but don't give two shits about LEDs or screens. I know marketing shows it sells, but I wish one brand would come out with a good cooling design that was minimalist and saved on cost. If they could bring it down $5-15, I would be happy. EVGA was the only one as of late to balance fairly good cooling with a more minimalist style and offer a good cooler without the extras (Black Editions).

Gideon

2[H]4U

- Joined

- Apr 13, 2006

- Messages

- 3,572

So it seems people are most upset about the price: $1600 for a flagship card. I am in Vegas this week for a conference, where 2 people can easily spend $1600 in a single night out at a good restaurant and a show. Now that's a colossal waste of money. $1600 for a top GPU that will last 4 years equates to less than $40 per month, before even counting resale value. Would I love to pay $800 for a top-end GPU? Yes, of course. But have you all noticed inflation? I would love to pay half the price for gas like I did 2 years ago, or get my steak at half the price like I did two years ago. Inflation sucks, and so do new expensive nodes and supply chain issues. But I thought this was a place where people are excited about tech. I would have shit my pants back in the 1990s, when I had my first Voodoo card, if someone had told me we would have this level of real-time ray tracing, or explained the tech behind DLSS to me, or shown me Cyberpunk's graphics in real time. We have come a long way, and the road ahead is exciting as hell. I am just super happy to have lived through computer history from the C64 to now, and to whatever will come over the next few decades.
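The cost-per-month argument works out as follows. A minimal sketch; the resale figure in the second call is a made-up placeholder, not a real used-market price:

```python
def monthly_cost(price_usd: float, years: float, resale_usd: float = 0.0) -> float:
    """Net cost per month of owning a card, after subtracting any resale value."""
    return (price_usd - resale_usd) / (years * 12)


print(f"{monthly_cost(1600, 4):.2f}")                  # 33.33
print(f"{monthly_cost(1600, 4, resale_usd=400):.2f}")  # 25.00 (hypothetical resale)
```

So "less than $40 per month" holds even with no resale at all.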

Yeah, and you also would have shit yourself when I told you how much you had to pay for it. Nvidia paying way too much for wafers is not my problem; it seems like theirs. They bet big that the mining train wouldn't end, and it looks like they crapped out on that one. No one is going to rush to buy a 4000 series card when there is a glut of 3000 series cards at a far better deal. You're on a tech forum, and only a handful of people have bought the halo card from either company just for gaming. I expect Nvidia stock to crash if they don't rethink their pricing plans, as the economy is no longer riding on a free wave of cash, and putting off the rent and other bills is no longer an option. We're about to see what the real demand is for high-end video cards.

Tamlin_WSGF

2[H]4U

- Joined

- Aug 1, 2006

- Messages

- 3,123

I checked and noticed that the selfish prick who made that video didn't upload it to Nexus Mods.

Sweet tool. I also noticed that while it requires an RTX card to make a mod, it's not locked to the 4XXX series, and the mod itself runs on any hardware that can run Vulkan ray-traced games:

https://www.nvidia.com/en-us/geforce/news/rtx-remix-announcement/

> NVIDIA RTX Remix requires a GeForce RTX GPU to create RTX Mods, while mods built using Remix should be compatible with any hardware that can run Vulkan ray-traced games.

I give Nvidia shit when they do shitty things, but in this case I give them only kudos.

(And a bit of kudos to AMD, who made Mantle, which Vulkan is built upon. I hope everything supports Vulkan in the end, since it's more platform-agnostic.)

KazeoHin

[H]F Junkie

- Joined

- Sep 7, 2011

- Messages

- 9,085

I'm going to make it my life's goal to remix the original Unreal in RTX.

> I'm going to make it my life's goal to remix the original Unreal in RTX.

Hold out for the 5000 cards.

That is when Jensen will start giving users "The Way It's Meant to Be Played" money to mod games.

TaintedSquirrel

[H]F Junkie

- Joined

- Aug 5, 2013

- Messages

- 12,746

Remember when they announced the 30 series and prices immediately started crashing on the secondhand market?

Even after the eth merge there's no movement right now.

Just gotta wonder who is going to be buying these new cards...

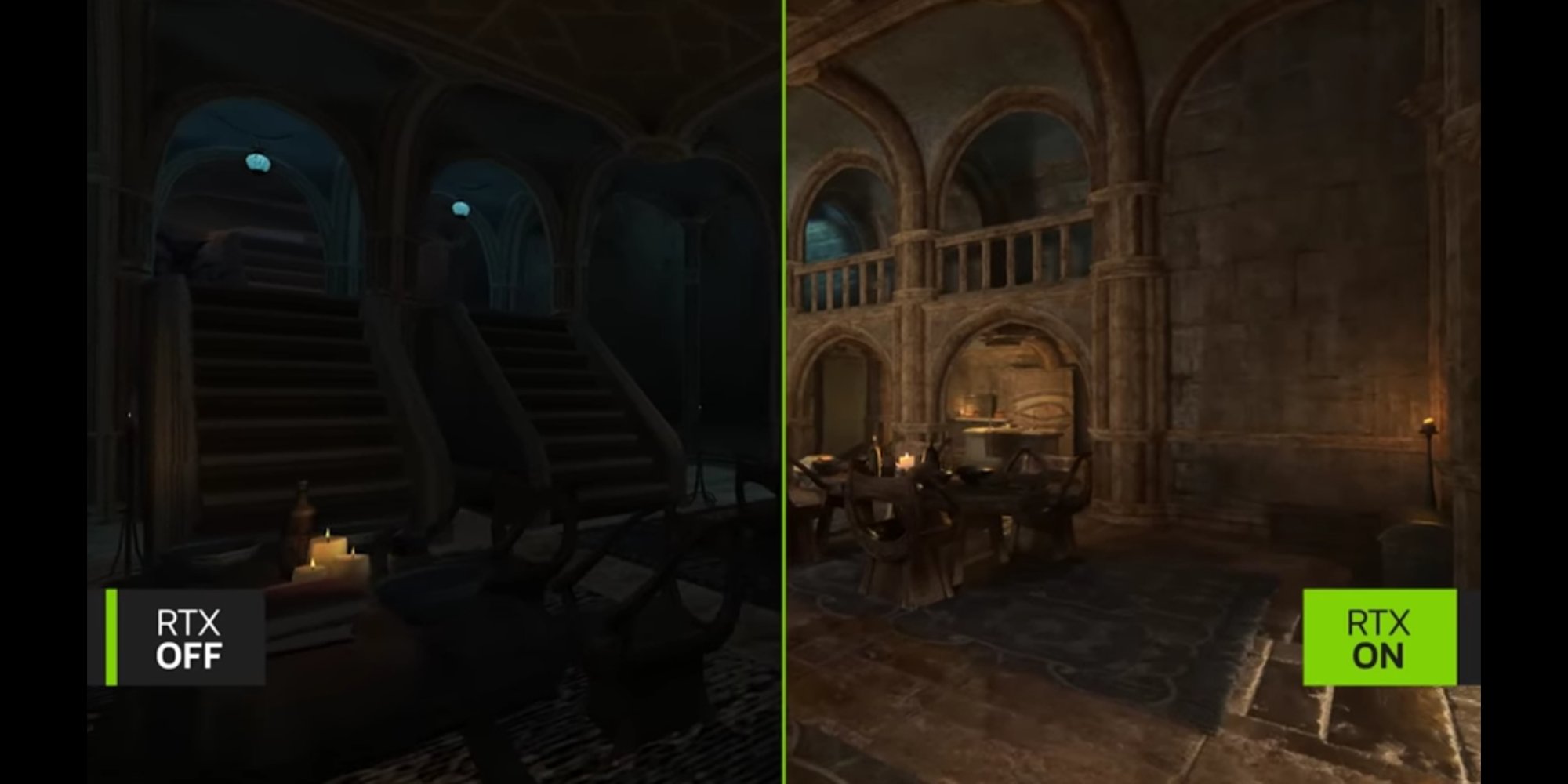

This is butchering of the intended look and atmosphere.

> When you quote me like this, it would help to replace the demonstrative pronouns with what they're referring to. The frame generation in DLSS 3.0 is an interpolation (blend) of two 2D images using direction information for each pixel. This is all done outside of the game engine. True ray tracing happens inside the game engine, because it needs access to all of the game's scene assets (lights, geometries, materials, etc.).

Deep Learning Super Sampling isn't ray tracing. DLSS is separate from and independent of the DX12 ray tracing libraries and doesn't do any ray tracing. It exists solely as a tool for various image manipulation and management systems.

Blade-Runner

Supreme [H]ardness

- Joined

- Feb 25, 2013

- Messages

- 4,439

Now tech media is starting to call Nvidia out....

https://www.digitaltrends.com/computing/why-the-rtx-4080-12gb-feels-like-rebranded-rtx-4070/

https://www.pcgamer.com/nvidia-rtx-40-series-let-down/

https://www.windowscentral.com/hard...ad-and-making-memes-of-nvidia-for-good-reason

I see some redditors are also grumbling that the 192-bit bus makes the 4080 12GB more akin to a 4060, based on previous-gen naming and spec conventions. Is that true? (Edit: even the PC Gamer article is suggesting this, and that it could be more accurately described as a 4060 Ti.)
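On the 192-bit point: theoretical peak memory bandwidth is bus width (bits) / 8 x per-pin data rate (Gbps), so a narrower bus needs faster memory to keep up. This sketch shows that formula only. The 21 Gbps rate is an assumed value used for both buses so that only the width differs, and bandwidth is just one input to performance (cache size, core count and clocks matter too).

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak memory bandwidth in GB/s: (bits / 8) bytes per transfer x per-pin rate."""
    return bus_width_bits / 8 * data_rate_gbps


ASSUMED_RATE_GBPS = 21.0  # hypothetical per-pin data rate, same for both buses

for width in (192, 256):
    print(width, peak_bandwidth_gb_s(width, ASSUMED_RATE_GBPS))
# 192 504.0
# 256 672.0
```

At the same data rate, the 192-bit bus has 75% of the 256-bit bus's bandwidth. That is the kind of gap people point to when comparing tiers, but whether it makes a card a "60-class" part is a naming judgment the formula can't settle.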

Blade-Runner

Supreme [H]ardness

- Joined

- Feb 25, 2013

- Messages

- 4,439

Choose wisely....and do your research! Personally I am waiting for the RTX 4080 2GB.

Armenius

Extremely [H]

- Joined

- Jan 28, 2014

- Messages

- 42,765

> This is butchering of the intended look and atmosphere.

You can't say that for certain. Morrowind ran on DX8, and it lacked a lot of the light rendering techniques we take for granted these days, like light proliferation. The video does have issues similar to the Quake II RTX mod, though. The candlelight in the video is definitely too intense. Whoever was responsible for the direction of the mod in the video has probably never been in a room lit solely by candlelight.

kirbyrj

Fully [H]

- Joined

- Feb 1, 2005

- Messages

- 30,720

> Now tech media is starting to call Nvidia out....
>
> https://www.digitaltrends.com/computing/why-the-rtx-4080-12gb-feels-like-rebranded-rtx-4070/
>
> https://www.pcgamer.com/nvidia-rtx-40-series-let-down/
>
> https://www.windowscentral.com/hard...ad-and-making-memes-of-nvidia-for-good-reason
>
> I see some redditors are also grumbling that the 192-bit bus makes the 4080 12GB more akin to a 4060, based on previous-gen naming and spec conventions. Is that true? (Edit: even the PC Gamer article is suggesting this, and that it could be more accurately described as a 4060 Ti.)

Nvidia's f'd up business model is that the high end card represents the best value. Instead of letting the overachievers pay 50% more for the last 10% of performance, they are "offering for sale" a card priced $400 more than it should cost for no good reason.
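The "50% more for the last 10% of performance" framing is a performance-per-dollar comparison. A small sketch using those illustrative percentages (they are the post's rhetorical figures, not benchmark data):

```python
def relative_value(price_ratio: float, perf_ratio: float) -> float:
    """Performance per dollar of card B relative to card A (1.0 = same value)."""
    return perf_ratio / price_ratio


# Card B costs 50% more than card A and is 10% faster.
print(round(relative_value(price_ratio=1.5, perf_ratio=1.1), 3))  # 0.733
```

In that traditional arrangement the top card delivers about 27% less performance per dollar; the complaint here is that this generation flips it, so the cheaper 80-class card becomes the worse value.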

This smells of Turing all over again. Looks like a pass on this generation until I get a super deal. Fuck Jensen.

> Now tech media is starting to call Nvidia out....
>
> https://www.digitaltrends.com/computing/why-the-rtx-4080-12gb-feels-like-rebranded-rtx-4070/
>
> https://www.pcgamer.com/nvidia-rtx-40-series-let-down/
>
> https://www.windowscentral.com/hard...ad-and-making-memes-of-nvidia-for-good-reason
>
> I see some redditors are also grumbling that the 192-bit bus makes the 4080 12GB more akin to a 4060, based on previous-gen naming and spec conventions. Is that true? (Edit: even the PC Gamer article is suggesting this, and that it could be more accurately described as a 4060 Ti.)

Good. Unless the 4080 12GB is a quantum leap forward in terms of performance, it's not going to measure up on price. I'm glad everyone else is now calling them out.

> Nvidia's f'd up business model is that the high-end card represents the best value. Instead of letting the overachievers pay 50% more for the last 10% of performance, they are making the middle class gamers pay $400 more for no good reason.

"They are making". As if there's a gun to anyone's head.

This may seem like a crazy concept, but if I'm not interested in a product I just don't buy it.

kirbyrj

Fully [H]

- Joined

- Feb 1, 2005

- Messages

- 30,720

> "They are making". As if there's a gun to anyone's head.
>
> This may seem like a crazy concept, but if I'm not interested in a product I just don't buy it.

Ok...they are "offering for sale"...happy? Still doesn't change my opinion of Fuck Jensen and his 4060 disguised as a 4080 for $900 bull shit.

DooKey

[H]F Junkie

- Joined

- Apr 25, 2001

- Messages

- 13,581

The 4090 isn't priced too badly compared to its projected performance. The pair of 4080s are simply priced too high from what I can tell at this point. Maybe reviews will cool the situation off a bit, but I doubt it.

Your post.

Ok, you brought up that Nvidia were breaking rules/regulations. I asked you to point to the rules/regulations they were breaking; I meant specifics.

And you state that there is plenty of case law where this exact point has been decided against marketers/sellers. Give me an example that applies exactly to this situation. I don't think you will find any. Rebranding/renaming has been part of GPUs since the beginning. We look at the specs and internal SKU name (like GK104, GF100 etc.) and we try to base our predictions about naming/price/performance etc. on that. But the fact is, Nvidia can name the final product anything they like. That's where your whole legal argument falls down. Throughout the years, both AMD and Nvidia have used different SKUs for different products, and sometimes the same products have had different SKUs. The final product name hasn't always matched what we, the tech crowd, predicted.

When the GTX 680/670 came out there was a lot of shouting from the tech community because Nvidia used their midrange SKU, the GK104. There was a lot of "outrage" about it: charging $499 for a midrange SKU was a disgrace, down with Nvidia, etc. etc. Yet no legal action. Hmm, odd that.

Just referencing your two examples: sure, the law can be slow, but the law is much quicker against things like misleading marketing or misrepresenting your product, as both AMD and Nvidia can attest. Both AMD and Nvidia got in trouble for this. AMD with their Llano GPU: they claimed it would be much better than it actually turned out to be, and investors bought shares based on that information. AMD had to pay a $30 million fine in 2017.

But the most relevant example might be the fine Nvidia got for misleading specifications/advertising. The GTX 970 had different specs than the ones they advertised. Do you really think that Nvidia are going to make a mistake like that again? Especially now when they are struggling because of huge overstocks of GPUs and AMD breathing down their neck?

So you see, the law works pretty quickly in cases like this. On at least two previous occasions Nvidia used different names than the ones tech forums thought they would. The GTX 680 should really have been the GTX 660, going by the previous release naming scheme. And the 1060 6GB and 1060 3GB had the same name despite a drop in performance that went beyond the memory difference.

That's the entire crux of the issue, the fact that they have changed the name to create the impression that the price hike is justified. The price they charge is a matter for them, but using marketing and naming to manipulate consumer sentiment and understanding of the product exposes them to legal issues.

Ah, the crux of the matter, as you say. You say they changed the name. Prove it. This is an entirely new lineup of GPUs. How do you know what they were going to call that GPU?

Love them or hate them, Nvidia is extremely good at marketing. And that's all this is: marketing. There will be no legal issues.

See above. It is quite apparent that this is based on your personal views. In the real world, consumers are entitled to assume truth in advertising. They are not obliged to do any research if they choose not to.

I wasn't indignant until I read this line. What a load of drivel. You talk about the real world and then write a statement like that. I asked you a question in my last post, but you didn't answer. If someone came to you about buying a new GPU, would you tell them to buy based on Nvidia's/AMD's slides and marketing, or would you tell them to wait for reviews? Would you tell them to just go into the store and buy it based on the specs on the box?

And that applies to anything, not just graphics cards. It's basic common sense. If you were buying a new TV, would you just rock into the store, look at the specs, and buy it purely on those specs? Or would you go check to see if there were any issues?

You know damn well that advertising can be completely honest and still not tell the whole story. And people make a mess of buying things all the time because they don't get advice or do any level of research. That's the actual real world. And, you are right, nobody is obliged to do any research at all if they don't want to. I never said otherwise. Is that how you go through life? Would that be how you recommend anybody else go through life? No, I don't think so. Unless you are going to lie just because it doesn't suit your narrative.

I make a point of posting on other sites about how AMD's drivers are superior to Nvidia's. Which is true.

Let's be honest. Even if they do, so many people are quick to sling out every Nvidia marketing line possible as to why they can't even consider an AMD card.

Going by online comment sections and forums, you'd think almost every home PC builder is a big-time streamer who also wants to do some machine learning on the side. Also, they only play DLSS/ray-traced games.

Oh, and AMD drivers personally kicked their dog sometime in the past 10 years. Unforgivable.

The hate in response is entertaining. It also shows how well Nvidia has the average non-[H] gamer snowed.

"How do you know AMD's drivers are horrible?" - to the average gaming-forum NV shill complaining about AMD's software.

Response from NV shill... "Because I read it on this forum, posted by another gamer"

"Really, wonder what AMD cards they had?"

"Rage something... they're all shit"

Pretty much how every one of those conversations goes.

Furious_Styles (Supreme [H]ardness, joined Jan 16, 2013, 4,594 messages)

That's because proving objectively that one manufacturer's drivers are better than the other's is pretty difficult. How are you proving that they are better?
