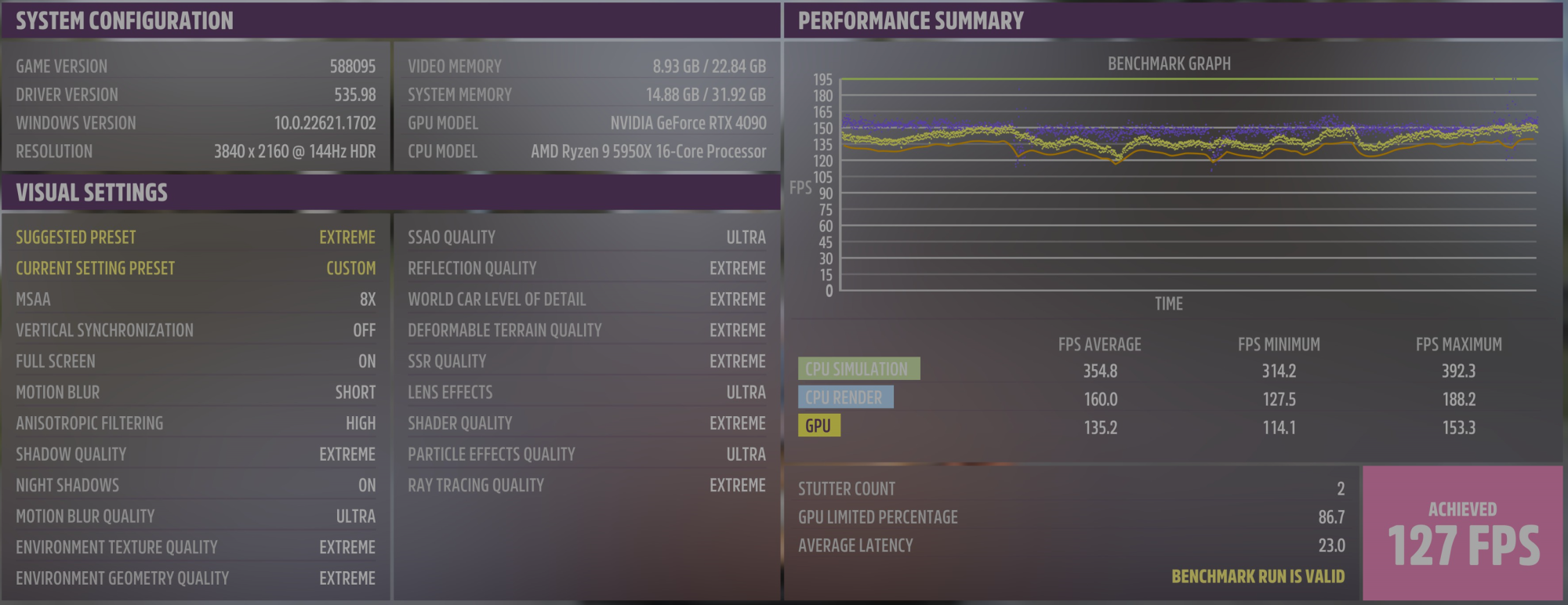

A static all-core overclock removes the ramping up and down of clock speeds, which tends to be the cause of microstutter. I'm running Linux, and for much of the year I have distributed computing (DC) programs running, so the CPU doesn't downclock and the microstutter isn't there. I use a program called CoreCtrl for monitoring and controlling my AMD video cards, but it also has a "Frequency governor" for Ryzen CPUs. I originally had this set to On Demand, which seemed to be the best balance between power savings (when not running heavy loads, at least) and performance. Since it's summer and I'm not running any DC projects, I started to notice microstutter and changed the setting from On Demand to Performance. The cores no longer clock under 3.8 GHz, and my microstutter disappeared. I have left it on this setting since, especially as power usage doesn't seem to be much higher: idle temps are almost exactly the same as before.

So obvious fun fact... SMT off also decreased my temps by almost 10~15°C when stress testing... lol. Now I can probably push this all-core OC past 4.725 GHz to 4.8 GHz+, maybe... Interestingly, my MT scores (not that it matters much for gaming) were not lowered by as much as I would have guessed. Still got over 25K in CB23 (vs. 31K), and the CPU-Z quick test was 10.5K (vs. 13.6K). However, after a few days here I can confirm gaming is even smoother. Why this is not "well known" knowledge is beyond me. Honestly, debate over in my eyes from my testing.

The source of the microstutter may have nothing to do with SMT and may instead come from the power-saving options.
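If you want to check which governor each core is actually on without a GUI, the Linux kernel exposes it through sysfs. Here is a minimal Python sketch, assuming the usual cpufreq layout under `/sys/devices/system/cpu`. The helper names `read_governors` and `set_governor` are made up for illustration, and which governors are available (`ondemand`, `performance`, and so on) depends on the cpufreq driver your kernel is using, so treat this as a starting point and not a guaranteed recipe.

```python
from collections import Counter
from pathlib import Path

# Standard Linux sysfs location for per-CPU data (assumed; override for testing).
CPU_ROOT = Path("/sys/devices/system/cpu")


def read_governors(root=CPU_ROOT):
    """Return {cpu_name: governor} for every core that exposes cpufreq."""
    governors = {}
    for gov_file in sorted(root.glob("cpu[0-9]*/cpufreq/scaling_governor")):
        cpu_name = gov_file.parent.parent.name  # e.g. "cpu0"
        governors[cpu_name] = gov_file.read_text().strip()
    return governors


def set_governor(name, root=CPU_ROOT):
    """Write `name` to every core's scaling_governor (needs root on a real system)."""
    for gov_file in root.glob("cpu[0-9]*/cpufreq/scaling_governor"):
        gov_file.write_text(name + "\n")


if __name__ == "__main__":
    # Summarize how many cores are on each governor.
    print(Counter(read_governors().values()))
```

Running it as a normal user only reads the current state. Calling `set_governor("performance")` would need root, and the change does not survive a reboot unless something reapplies it at startup.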
