_Gea's latest activity

- _Gea replied to the thread OpenSolaris derived ZFS NAS/ SAN (OmniOS, OpenIndiana, Solaris and napp-it). Release Notes for OmniOSce v11 r151050, r151050d (2024-05-31): https://omnios.org/releasenotes.html Weekly release for w/c 27th of May 2024. Security Fixes: ncurses has been updated to version 6.4.20240309. Other Changes: The algorithm for...

- _Gea replied to the thread Open-ZFS on OSX and Windows. In the meantime, two problems have been discovered with Open-ZFS on Windows 2.2.3 rc5: a compatibility problem with AOMEI Backupper (crash after setup); 'zfs get mounted' does not return a value. rc6 is underway to address these. Please report all...

- _Gea replied to the thread Best HDD for long term storage solution?. RAID is a method of building an array from several disks with redundancy, e.g. a mirror. This allows a disk to fail completely without data loss. Redundancy is the important point. With single disks and ZFS you can set copies=2, which means that every...

- _Gea replied to the thread Best HDD for long term storage solution?. For local long-term storage with disks: allow disks to fail without data loss (RAID); allow for bitrot (ZFS with regular scrubs, at least once or twice a year, to repair bitrot detected via checksums on data and metadata); allow the array to fail...

- _Gea replied to the thread OpenSolaris derived ZFS NAS/ SAN (OmniOS, OpenIndiana, Solaris and napp-it). OpenSolaris, the source of Illumos, was developed together with, I would say around, ZFS. This deep integration makes it very resource efficient. The point here is that Illumos/OmniOS is compatible with Open-ZFS (you can move pools), apart from some...

- _Gea replied to the thread OpenSolaris derived ZFS NAS/ SAN (OmniOS, OpenIndiana, Solaris and napp-it). https://omnios.org/releasenotes.html Unlike Oracle Solaris with native ZFS, OmniOS stable is compatible with Open-ZFS but has its own dedicated software repositories per stable/LTS release. This means that a simple 'pkg update' gives the newest...

- New release of ZFS on Windows, zfs-2.2.3rc4. It is fairly close to upstream OpenZFS-2.2.3, with dRAID and RAID-Z expansion: https://github.com/openzfsonwindows/openzfs/releases/tag/zfswin-2.2.3rc4 rc4: Unload BSOD, cpuid clobbers rbx. Most of the...

- _Gea replied to the thread Napp-it cs web-gui for (m)any ZFS server or servergroups. A Raspberry Pi 4 can be managed remotely by the napp-it cs web-gui. Just upload the folder cs_server and start it via: perl /path_to_cs_server/start_server_as_admin.pl # uname -a raspberry4~192.168.2.89 Linux phoscon 5.10.103-v7l+ #1529 SMP Tue Mar 8 12:24:00...

- _Gea replied to the thread OpenSolaris derived ZFS NAS/ SAN (OmniOS, OpenIndiana, Solaris and napp-it). Release Notes for OmniOS v11 r151048, r151048w (2024-04-11): Weekly release for w/c 8th of April 2024. https://omnios.org/releasenotes.html Security Fixes: For Intel CPUs that are vulnerable to Native Branch History Injection, the kernel now takes...

- _Gea replied to the thread Napp-it cs web-gui for (m)any ZFS server or servergroups. How much RAM do I need for napp-it cs? RAM for a ZFS filer has no relation to pool or storage size (apart from dedup)! Calculate 2 GB for a 64-bit OS, then add 1-2 GB for a Solaris-based filer or 3-4 GB for a BSD/Linux/OSX/Windows-based filer for minimal...
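
The rule of thumb quoted in this preview can be written as a small calculation. This is only a sketch in Python, assuming nothing beyond the figures visible above (2 GB for a 64-bit OS, plus 1-2 GB for a Solaris-based filer or 3-4 GB for a BSD/Linux/OSX/Windows-based filer). The function name and the platform labels are hypothetical, and whatever the truncated post goes on to say is not covered.

```python
from typing import Tuple

# Figures come from the post preview; all names here are illustrative only.
BASE_OS_GB = 2  # base allowance for a 64-bit OS

# Additional RAM (low, high) in GB per filer family, as quoted in the preview.
FILER_EXTRA_GB = {
    "solaris": (1, 2),  # Solaris-based filer
    "other": (3, 4),    # BSD/Linux/OSX/Windows-based filer
}


def minimal_ram_gb(platform: str) -> Tuple[int, int]:
    """Return the (low, high) minimal RAM range in GB for a filer family."""
    low, high = FILER_EXTRA_GB[platform]
    return BASE_OS_GB + low, BASE_OS_GB + high
```

With these figures, minimal_ram_gb("solaris") gives a range of 3 to 4 GB and minimal_ram_gb("other") gives 5 to 6 GB, independent of pool size as the post states (dedup aside).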

- _Gea replied to the thread OpenSolaris derived ZFS NAS/ SAN (OmniOS, OpenIndiana, Solaris and napp-it). The flavours of ZFS: Native ZFS in Solaris 11. This is the Unix that ZFS was developed for. The most resource-efficient and stable ZFS, and probably the fastest one. In 20 years I have not seen as many bug reports, up to data loss, as on...

- _Gea replied to the thread Napp-it cs web-gui for (m)any ZFS server or servergroups. napp-it cs beta, current state (Apr. 05): Server groups with remote web-management (BSD, Illumos, Linux, OSX, Windows): ok. ZFS (pool, filesystem, snap management): ok on all platforms. Jobs (snap, scrub, replication from any source to any...

- _Gea replied to the thread Napp-it cs web-gui for (m)any ZFS server or servergroups. Another day, another step: Replication between servers is basically working, with problems on some combinations. https://forums.servethehome.com/index.php?threads/napp-it-cs-web-gui-for-m-any-zfs-server-or-servergroups.42971/page-3#post-420458

- _Gea replied to the thread Move away from unsafe FAT on USB sticks or disks, use ZFS. You can't just give away a file on a USB stick to anyone like you can with *Fat*, but that is not the point, and for that I use a cloud link now. All of my systems have ZFS, so I can just plug an external USB disk (can be 20 TB) into, e.g., my Mac, Linux/...

-

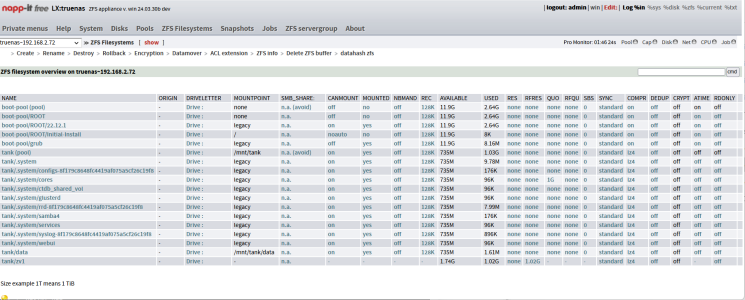

G_Gea replied to the thread Napp-it cs web-gui for (m)any ZFS server or servergroups.TrueNAS as a servergroup member - Enable SSH, allow root (sharing options) or SMB - Copy napp-it cs_server to a filesystem dataset ex tank/data (/mnt/tank/data) - open a root shell and enter: perl...

- _Gea replied to the thread All-In-One (ESXi/Proxmox) with virtualized Solarish based ZFS-SAN in a box). If you want to move your AiO setup from ESXi to Proxmox, read https://forums.servethehome.com/index.php?threads/napp-it-for-proxmox.32368/#post-419886

- _Gea replied to the thread OpenSolaris derived ZFS NAS/ SAN (OmniOS, OpenIndiana, Solaris and napp-it). If you want to move your OmniOS AiO setup from ESXi to Proxmox, read https://forums.servethehome.com/index.php?threads/napp-it-for-proxmox.32368/#post-419886

- _Gea replied to the thread Move away from unsafe FAT on USB sticks or disks, use ZFS. There are use cases where you still need a *Fat* variant and data loss or an undetected bad file is not relevant. There are other use cases where data security is the main concern, like a secure data move via stick or a backup via checksum-protected ZFS...

- _Gea replied to the thread Move away from unsafe FAT on USB sticks or disks, use ZFS. OK, but then you say: no to checksums (no report on bad files); no to Copy on Write (undamaged filesystems after a crash/removal during write); no to transparent compression; no to transparent encryption; no to auto-repair of files on bad blocks with...

- _Gea replied to the thread OpenSolaris derived ZFS NAS/ SAN (OmniOS, OpenIndiana, Solaris and napp-it). A napp-it replication uses dedicated snaps (*_repli.._nr_n) and protects them from autosnap, so normally you should have a common base snap. If not, you cannot sync them again. Rsync can sync files but cannot make filesystems exactly identical...
